A/B Testing for Email: When Small Changes Reveal Big Signals

The Subject Line That Surprised Me

I once sent out an email campaign that I was genuinely proud of. The design was polished, the copy carefully written, and the offer clear. A few days later, the open rate was… average. Not terrible, but nowhere near what I expected. Out of curiosity more than strategy, I re-sent the same email to a small segment with a slightly different subject line: just a subtle shift in tone from “Limited Offer Inside” to “A Small Thank-You for You.”

The difference wasn’t explosive, but it was meaningful. The second version quietly outperformed the first. That moment changed how I looked at email. It wasn’t about redesigning everything; it was about understanding how small language choices could influence human response. A/B testing stopped feeling like a technical switch in the platform and started feeling like listening more carefully.

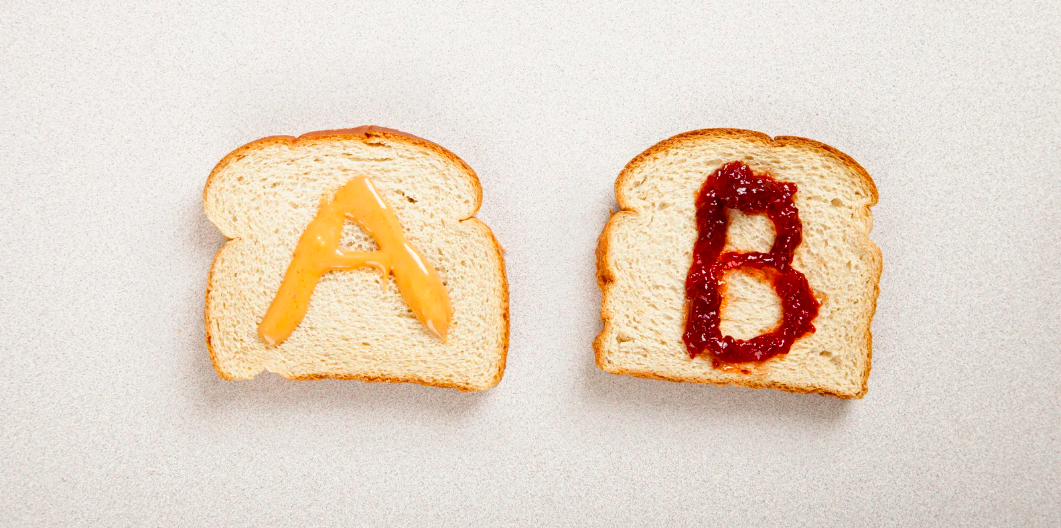

Two Versions, One Question

At its core, A/B testing is simple: create two variations, send each to a randomly chosen portion of your audience, observe which performs better, and let the stronger version reach the rest. But the simplicity hides depth. The real power comes from what you choose to test.
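For readers who like to see the mechanics, here is a minimal sketch of that split in Python. Most email platforms do this for you behind a slider, so treat it as an illustration rather than how any particular tool works. The function name `split_for_ab_test`, the 20% test fraction, and the example addresses are all my own assumptions.

```python
import random


def split_for_ab_test(subscribers, test_fraction=0.2, seed=None):
    """Randomly pick a test slice of the list and divide it evenly into A and B.

    Returns (group_a, group_b, remainder). The remainder is the part of the
    audience that waits for the winning version.
    """
    if not 0 < test_fraction <= 1:
        raise ValueError("test_fraction must be greater than 0 and at most 1")

    shuffled = list(subscribers)            # copy so the caller's list is untouched
    random.Random(seed).shuffle(shuffled)   # random order avoids biased groups

    half = int(len(shuffled) * test_fraction) // 2
    group_a = shuffled[:half]
    group_b = shuffled[half:2 * half]
    remainder = shuffled[2 * half:]
    return group_a, group_b, remainder


# Hypothetical audience of 1,000 addresses.
audience = [f"user{i}@example.com" for i in range(1000)]
group_a, group_b, remainder = split_for_ab_test(audience, test_fraction=0.2, seed=7)
print(len(group_a), len(group_b), len(remainder))
```

The shuffle is the part that matters. If Version A goes to your oldest subscribers and Version B to the newest, you end up measuring the groups instead of the subject lines.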

Sometimes it’s the subject line — curiosity versus clarity. Other times it’s the call-to-action button — “Shop Now” versus “Explore Collection.” Even preview text, header imagery, or send time can shift results. Each variation becomes less about proving one design “right” and more about discovering which tone or structure resonates with the audience at that moment.

Where Numbers Meet Psychology

What makes email A/B testing fascinating is that the metrics are numerical, but the reactions are emotional. Open rate reflects curiosity. Click-through rate reflects interest. Conversion rate reflects commitment. Behind each percentage is a split-second decision made by a real person scanning their inbox.
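To make those three percentages concrete, here is a small sketch of how they might be computed for one variant. Definitions differ between platforms (some divide clicks by opens rather than by delivered emails, for instance), so the formulas below are one common convention that I am assuming, and the class name `VariantStats` and the sample counts are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Raw counts for one version of an email (hypothetical structure)."""
    delivered: int
    opens: int
    clicks: int
    conversions: int

    def _rate(self, count):
        # Guard against division by zero when nothing was delivered.
        return count / self.delivered if self.delivered else 0.0

    @property
    def open_rate(self):
        return self._rate(self.opens)

    @property
    def click_through_rate(self):
        return self._rate(self.clicks)

    @property
    def conversion_rate(self):
        return self._rate(self.conversions)


# Made-up numbers, only to show the arithmetic.
version_a = VariantStats(delivered=1000, opens=250, clicks=50, conversions=10)
print(f"open rate:       {version_a.open_rate:.1%}")
print(f"click-through:   {version_a.click_through_rate:.1%}")
print(f"conversion rate: {version_a.conversion_rate:.1%}")
```

Using the same denominator for all three keeps the numbers comparable between versions, which matters more in a test than which convention you pick.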

Over time, I realized that context matters as much as creativity. A playful subject line might succeed during a holiday campaign but feel out of place in a formal announcement. A morning send time may work for one segment and not another. Testing becomes less about universal formulas and more about understanding patterns unique to your subscribers. It feels less like experimentation and more like a conversation that evolves.

The Lesson About Size and Patience

One lesson I learned a bit later was that A/B testing isn’t only about creating two versions; it’s also about how many people see each version and how you decide the winner. Sending Version A to ten people and Version B to another ten might feel scientific, but it’s closer to guessing. With small samples, randomness speaks louder than preference.
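A rough way to see why ten recipients is too few is to look at the margin of error around a measured rate. The sketch below uses the standard normal approximation for a proportion, which is itself shaky at very small samples, so read the small-sample figure as "very wide" rather than as a precise number. The 20% open rate is an assumed example, and `margin_of_error` is a name I made up.

```python
import math


def margin_of_error(rate, sample_size, z=1.96):
    """Approximate 95% margin of error for an observed rate (normal approximation)."""
    return z * math.sqrt(rate * (1 - rate) / sample_size)


# Assumed example: a 20% open rate measured on groups of different sizes.
for n in (10, 100, 1000, 10000):
    moe = margin_of_error(0.20, n)
    print(f"n = {n:>5}: 20% open rate, give or take {moe:.1%}")
```

With ten recipients the uncertainty comes out at roughly 25 percentage points either way, wider than the rate itself. At ten thousand it drops below one point. A gap of a few points between two versions only starts to mean something once the margin is smaller than the gap.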

As the sample size grows, results stabilize. Waiting long enough for meaningful data (enough opens, clicks, or conversions) changes the quality of decisions. Some email platforms automatically calculate statistical confidence, but even without advanced math, the principle remains simple: don’t rush the conclusion. A strong signal takes time to rise above noise. Declaring a winner too early often means choosing speed over accuracy.
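If your platform doesn't report confidence, one common way to check a result by hand is a two-proportion z-test. I am not claiming this is what any specific email tool runs internally; it is a textbook method, sketched here with only the standard library. The function name and all the counts are hypothetical.

```python
import math


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Two-sided z-test for the difference between two rates.

    Returns (z, p_value). A small p-value suggests the gap between A and B
    is unlikely to be chance alone.
    """
    rate_a = successes_a / n_a
    rate_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation at all, so nothing to distinguish
    z = (rate_b - rate_a) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail probability
    return z, p_value


# Made-up results: 20% vs 25% opens on 1,000 sends each...
z_large, p_large = two_proportion_z_test(200, 1000, 250, 1000)
# ...and 2 vs 3 opens on 10 sends each.
z_small, p_small = two_proportion_z_test(2, 10, 3, 10)

print(f"1,000 per group: z = {z_large:.2f}, p = {p_large:.4f}")
print(f"   10 per group: z = {z_small:.2f}, p = {p_small:.4f}")
```

In the larger example the p-value falls well under the usual 0.05 threshold. In the ten-person example, where the raw gap is actually bigger, it stays far above that threshold, which is the "closer to guessing" situation in numbers. One caution: checking the p-value repeatedly and stopping the moment it dips below 0.05 inflates false positives, so it helps to decide the sample size or waiting period before sending.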

The Rhythm of Continuous Refinement

Over time, A/B testing introduced a healthy rhythm into email strategy. Instead of guessing which layout or headline might work, campaigns evolved through cycles of observation and adjustment. The process didn’t slow creativity; it supported it.

The key was restraint. Testing too many variables at once blurred insights, while focusing on one meaningful change clarified direction. Gradually, each campaign became a small lesson that informed the next. It wasn’t about chasing perfection; it was about building confidence through repeated learning.

What Stays With Me

A/B testing for email is less about competition between two versions and more about curiosity toward the audience. It transforms campaigns from one-way broadcasts into responsive dialogues. The practice doesn’t replace intuition or creativity; it refines them with feedback.

Looking back, what stands out isn’t which subject line “won,” but the mindset shift that followed. Emails stopped being static messages and became evolving experiences shaped by real interaction. In the end, A/B testing isn’t about finding a final answer — it’s about learning continuously, one thoughtful variation at a time.